SEMRush is a powerful tool that shows how your website performs for traffic and keywords. However, it can also be a pain for website owners: its bots crawl your site and can generate a lot of traffic, which can quickly eat up your bandwidth and slow down your site.

Fortunately, there are a few ways to block the SEMRush bots, listed below.

How to block the SEMRush Bot from your website

If you’ve seen a lot of strange traffic coming from the SEMRush crawler, you may want to block it. Here’s how to block the SEMRush bot. Keep in mind that robots.txt is a request that well-behaved crawlers follow, not an access control, so it cuts down on crawl traffic rather than protecting your site from unauthorized access.

Use Robots.TXT to block SEMRush Bot

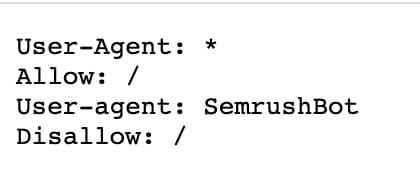

Add the code below to your robots.txt file. This file tells crawlers which parts of your site they should not crawl, and you can use it to exclude the SEMRush bot from your entire site.

User-agent: SemrushBot
Disallow: /

Download a robots.txt template that will block the SEMRush Bot.

Test the SEMRush Bot Block

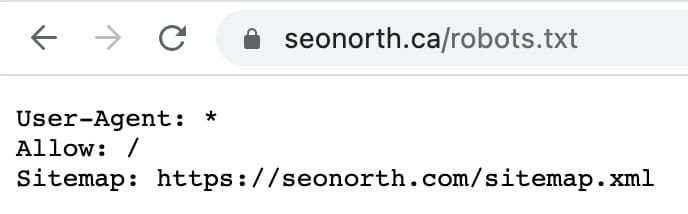

After adding the disallow rule to your robots.txt file, it’s always a good idea to confirm that the change is live.

You can test this by going to your website and adding /robots.txt to the URL.

e.g., example.com/robots.txt
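If you would rather check the rule with a script than by eye, here is a minimal sketch using Python's standard `urllib.robotparser`. The sample robots.txt text and the `is_blocked` helper are assumptions for illustration, and Python's parser may not interpret every rule exactly as the SEMRush crawler does, so treat the result as a sanity check rather than proof.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content (an assumption for illustration; paste your own file here).
ROBOTS_TXT = """\
User-agent: SemrushBot
Disallow: /
"""


def is_blocked(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt disallows user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # example.com is a placeholder domain.
    print(is_blocked(ROBOTS_TXT, "SemrushBot", "https://example.com/any-page"))  # True
    print(is_blocked(ROBOTS_TXT, "Googlebot", "https://example.com/any-page"))   # False
```

To test the live file instead of a pasted copy, `RobotFileParser` also offers `set_url()` and `read()`, which download the robots.txt from your site before you call `can_fetch()`.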

How to find and edit your robots.txt file

The robots.txt file is located in the root directory of your website. You can download it with an FTP client like Cyberduck and edit it in any text editor. To edit the file, open it in your text editor and make the necessary changes. Be sure to save the file after making any changes, and upload it to your server if necessary. With a few simple edits, you can control which parts of your website search engine bots and other crawlers are allowed to crawl.
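If you manage several sites, the edit can also be scripted. The sketch below is one possible approach, not an official tool: `add_block_rule` is a hypothetical helper that takes the current robots.txt text and appends a blocking group for a user agent unless a group for that agent is already there.

```python
def add_block_rule(robots_txt: str, user_agent: str = "SemrushBot") -> str:
    """Return robots_txt with a 'Disallow: /' group for user_agent appended.

    If the file already names that user agent, the text is returned unchanged.
    Note: this only checks that the group exists, not which rules it contains.
    """
    existing = {
        line.split(":", 1)[1].strip().lower()
        for line in robots_txt.splitlines()
        if line.strip().lower().startswith("user-agent:")
    }
    if user_agent.lower() in existing:
        return robots_txt

    group = f"User-agent: {user_agent}\nDisallow: /\n"
    body = robots_txt.rstrip("\n")
    # Separate the new group from earlier groups with one blank line.
    return f"{body}\n\n{group}" if body else group


if __name__ == "__main__":
    current = "User-agent: *\nAllow: /\n"
    print(add_block_rule(current))
```

You would read your downloaded robots.txt into a string, pass it through the function, write the result back, and then upload the file as usual. Passing a different `user_agent` lets you add a group for another crawler the same way.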

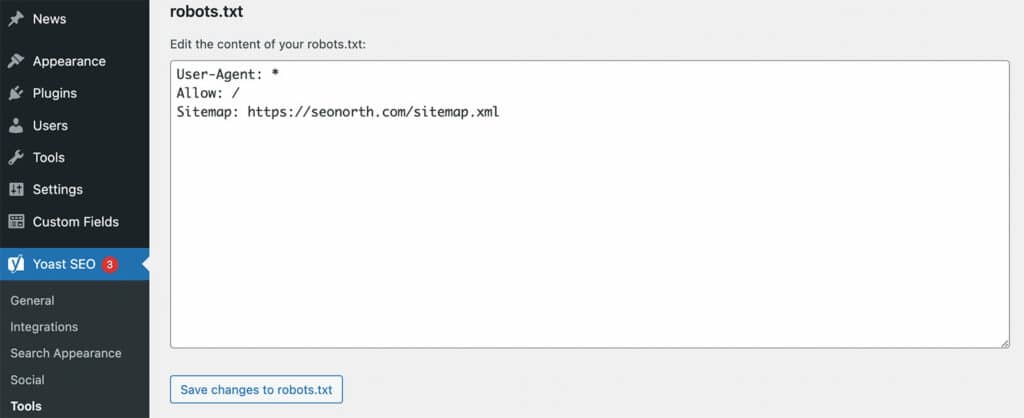

If you use WordPress, you can create and edit the robots.txt file with the Yoast plugin. In WordPress, click Yoast SEO >> Tools >> File Editor >> robots.txt

* If you do not have a robots.txt file, you can generate one here.

* You must place a robots.txt file on each subdomain if you have subdomains.

How to tell SEMRushBot to slow down crawling your website

Adding this code to your robots.txt file asks the bot to wait 60 seconds between requests, which works out to one URL every minute.

User-agent: SemrushBot
Crawl-delay: 60
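To see what that delay means in practice, here is a small sketch, again using Python's standard `urllib.robotparser`. The helper names `crawl_delay_for` and `max_requests_per_day` are made up for this example, and the daily figure is simple arithmetic under the assumption that the bot waits the full delay between requests.

```python
from urllib.robotparser import RobotFileParser

SECONDS_PER_DAY = 24 * 60 * 60

# The two directives sit on adjacent lines so they form one group.
RULES = "User-agent: SemrushBot\nCrawl-delay: 60\n"


def crawl_delay_for(robots_txt, user_agent):
    """Return the Crawl-delay (in seconds) that applies to user_agent, or None."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.crawl_delay(user_agent)


def max_requests_per_day(delay_seconds):
    """Upper bound on daily requests for a bot that waits delay_seconds between requests."""
    return SECONDS_PER_DAY // delay_seconds


if __name__ == "__main__":
    delay = crawl_delay_for(RULES, "SemrushBot")
    print(delay)                        # 60
    print(max_requests_per_day(delay))  # 1440
```

In other words, a 60-second delay caps a compliant bot at roughly 1,440 URLs per day, so pick a value that matches how much crawling your server can comfortably handle.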

How to block specific SEMRush crawlers

To block SemrushBot from crawling your site from the SEO Technical Audit:

User-agent: SiteAuditBot
Disallow: /

To block SemrushBot from crawling your site for the Backlink Audit tool:

User-agent: SemrushBot-BA
Disallow: /

To block SemrushBot from crawling your site for the On-Page SEO Checker tool and similar tools:

User-agent: SemrushBot-SI
Disallow: /

To block SemrushBot from checking URLs on your site for the SWA tool:

User-agent: SemrushBot-SWA
Disallow: /

To block SemrushBot from crawling your site for Content Analyzer and Post Tracking tools:

User-agent: SemrushBot-CT
Disallow: /

To block SemrushBot from crawling your site for Brand Monitoring:

User-agent: SemrushBot-BM
Disallow: /

To block SplitSignalBot from crawling your site for the SplitSignal tool:

User-agent: SplitSignalBot
Disallow: /

To block SemrushBot-COUB from crawling your site for the Content Outline Builder tool:

User-agent: SemrushBot-COUB
Disallow: /

Conclusion

Now that you understand how to block the SEMRush Bot and have some tips for improving your website SEO, it’s time to put them into practice. Hiring a professional SEO agency can help take your website to the next level and improve your online presence. Are you ready to get started?

FAQ

What is the SEMrush Bot?

Why Does SEMRush Bot crawl your website?

What does SemrushBot use with the data collected?

How often does SEMRush check your Robots.txt file?

What bot does SEMRush use to crawl?

Published on: 2022-08-20

Updated on: 2024-09-16