It can be very frustrating when you’re crawling a website and suddenly hit a timeout or a 403 Forbidden error. What do you do when a crawl returns HTTP status code errors such as bad requests, internal server errors, or “no response”? In this blog post, we’ll explain the 403 Forbidden error and walk through ways to fix it in Screaming Frog.


What is a 403 Forbidden Error?

The 403 Forbidden status code is a client error response (the 4xx class) indicating that the server understood the request but refuses to fulfill it. This can happen for several reasons, including but not limited to: the request is for a resource that does not exist (some servers answer with 403 rather than 404 in this case), the user does not have sufficient permissions to access the requested resource, or the server is configured to block requests from the user’s IP address.

Regardless of the reason, a 403 Forbidden error will prevent the user from accessing the requested resource. In some cases, the server may provide a redirect to another page or resource that can be accessed; in other cases, the user will need to contact the website owner or administrator to resolve the issue. Either way, a 403 Forbidden error frustrates any user who encounters it.

How to fix a 403 Forbidden status code error in Screaming Frog

The page doesn’t exist

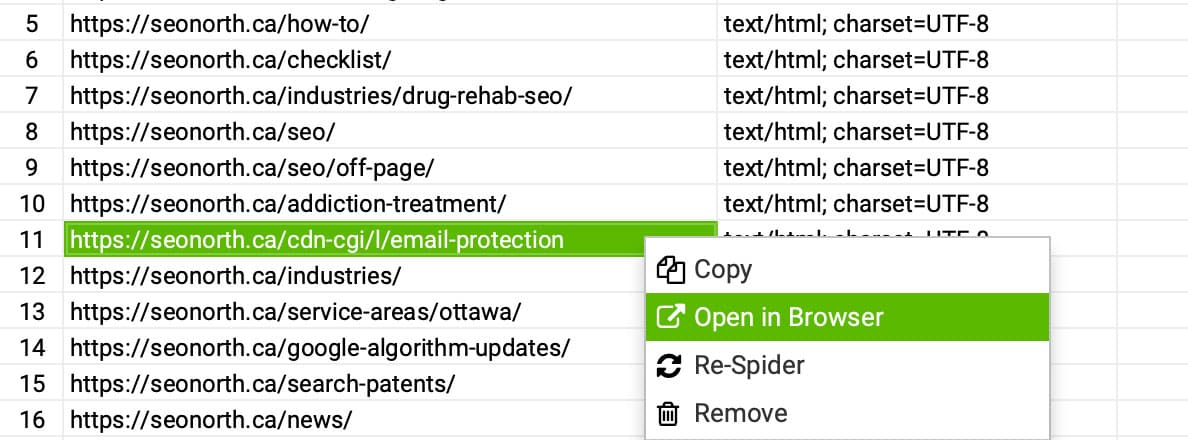

Plausible cause 1: The request is being made for a resource that does not exist

You will need to verify that the web page exists. You can do this by opening the URL in your browser.

This test will show you if the web page is reachable from the browser.
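If you would rather check the status code directly than rely on what the browser renders, a small script can do it. The following is a minimal sketch using only the Python standard library; the function names `fetch_status` and `classify_status` are made up for this example, and the hints are rough guidance rather than a full diagnosis. Keep in mind that the script sends Python’s default User-Agent, which some servers also block.

```python
import urllib.error
import urllib.request


def classify_status(code: int) -> str:
    """Map an HTTP status code to a rough troubleshooting hint."""
    if code == 404:
        return "not found: the page does not exist"
    if code == 401:
        return "unauthorized: credentials are required"
    if code == 403:
        return "forbidden: the server understood the request but refuses it"
    if 200 <= code < 300:
        return "ok: the page is reachable"
    return "other: inspect the response for details"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the final HTTP status code for a GET request to url."""
    request = urllib.request.Request(url, method="GET")
    try:
        # urlopen follows redirects, so this is the status of the final page.
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises HTTPError for 4xx/5xx responses; the code is still useful.
        return error.code
```

Calling `classify_status(fetch_status(url))` with the URL that failed in the crawl gives you a quick second opinion alongside the browser test.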

Authentication Required

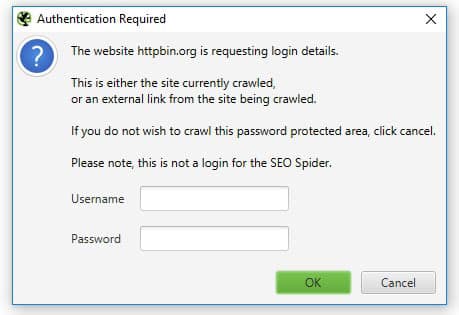

Plausible cause 2: The user does not have sufficient permissions to access the requested resource.

In some cases, the page is password protected. You will need to enter the username and password into the Screaming Frog modal popup for it to crawl that page.

The Server is blocking you

Plausible cause 3: The server is configured to block requests from the user’s IP address.

If the page loads in a browser on your computer but not in Screaming Frog, the website is most likely blocking the crawler specifically rather than every request from your machine.

Lower Crawl Speed

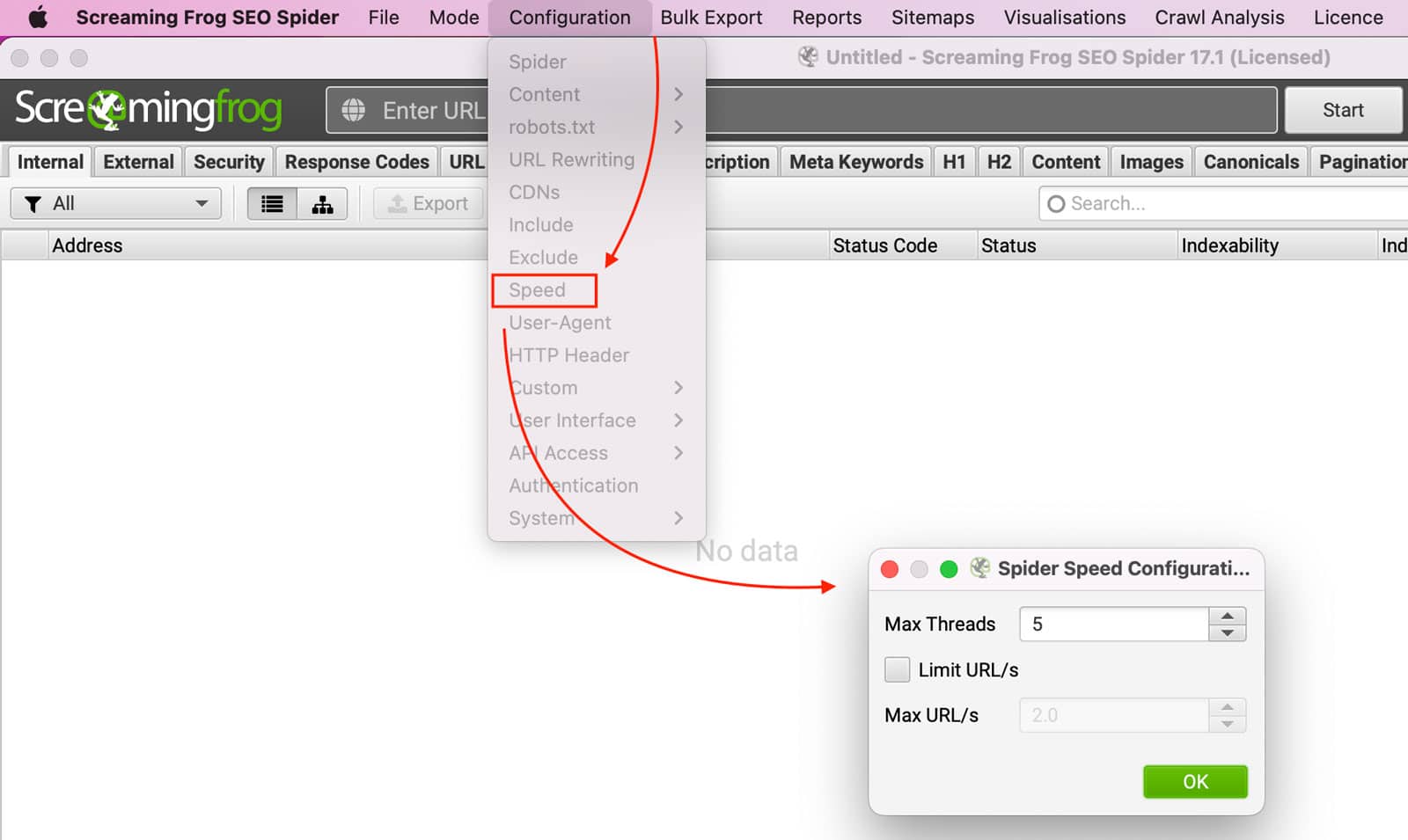

Solution 1: You might have the spider set up to crawl too fast and too aggressively.

To fix aggressive scans, you will need to lower the crawl rate:

Configuration >> Speed >> Spider Speed Configuration

Lowering your crawl rate will increase the time it takes to complete a crawl, but it reduces the load on the server.
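To get a feel for the trade-off, you can estimate how long a crawl takes at a given rate. This is simple arithmetic, sketched here in Python; the function name `estimated_crawl_minutes` is made up for this example, and it assumes the crawler sustains the configured rate for the whole crawl, which real crawls rarely do.

```python
def estimated_crawl_minutes(url_count: int, urls_per_second: float) -> float:
    """Estimate crawl duration in minutes at a steady URLs-per-second rate."""
    if urls_per_second <= 0:
        raise ValueError("urls_per_second must be greater than zero")
    return url_count / urls_per_second / 60


# 6,000 URLs at 5 URLs per second versus 1 URL per second
fast = estimated_crawl_minutes(6000, 5.0)   # 20.0 minutes
slow = estimated_crawl_minutes(6000, 1.0)   # 100.0 minutes
```

In this example, dropping from 5 URLs per second to 1 makes the crawl five times longer, which is usually an acceptable price if it stops the server from rejecting requests.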

Change the User-Agent

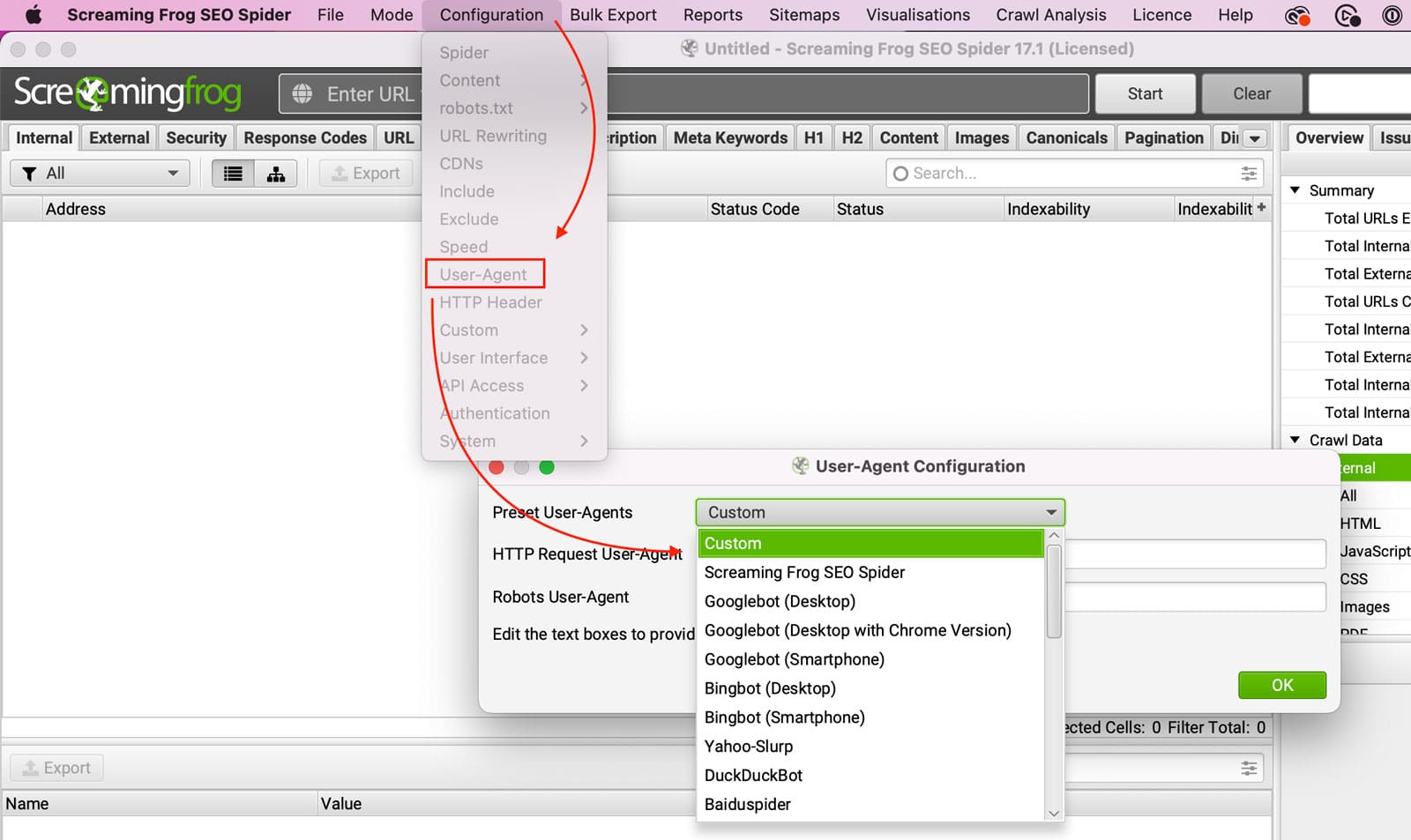

Solution 2: If the website is blocking the Screaming Frog Crawler, change the User-Agent in Screaming Frog.

Configuration >> User-Agent >> Preset User-Agents

Some servers are set up to block anything except Googlebot.
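Before changing the setting, you can check whether the User-Agent is really what triggers the block by requesting the same URL with different User-Agent headers and comparing the status codes. The sketch below uses only the Python standard library. The function names are made up for this example, and the Screaming Frog string in the usage note is an illustrative placeholder, not necessarily the exact value your version sends. Some servers also verify Googlebot by other means, so a spoofed Googlebot string may still be refused.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict, List


def status_for_agent(url: str, user_agent: str, timeout: float = 10.0) -> int:
    """Request url with the given User-Agent and return the HTTP status code."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # 4xx/5xx responses arrive as exceptions; keep the status code.
        return error.code


def compare_user_agents(
    url: str,
    agents: Dict[str, str],
    fetch: Callable[[str, str], int] = status_for_agent,
) -> Dict[str, int]:
    """Return a mapping of agent label to status code for the same URL."""
    return {label: fetch(url, agent) for label, agent in agents.items()}


def blocked_agents(results: Dict[str, int]) -> List[str]:
    """List the agent labels that received a 403 response."""
    return sorted(label for label, code in results.items() if code == 403)
```

For example, you could pass `{"crawler": "Screaming Frog SEO Spider", "googlebot": "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"}` as `agents`. If only the crawler label shows up in `blocked_agents`, changing the User-Agent in Screaming Frog is worth trying.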

Change your IP

Solution 3: Change your IP address

The server could be blocking your IP address, so try the crawl from a different network or internet service provider.

If you are at work, try the crawl from home. If you are at home, try a coffee shop with good internet. If you can’t leave, try tethering your computer to your phone. Changing locations will help you troubleshoot and determine if your IP is blocked.

Whitelist your IP

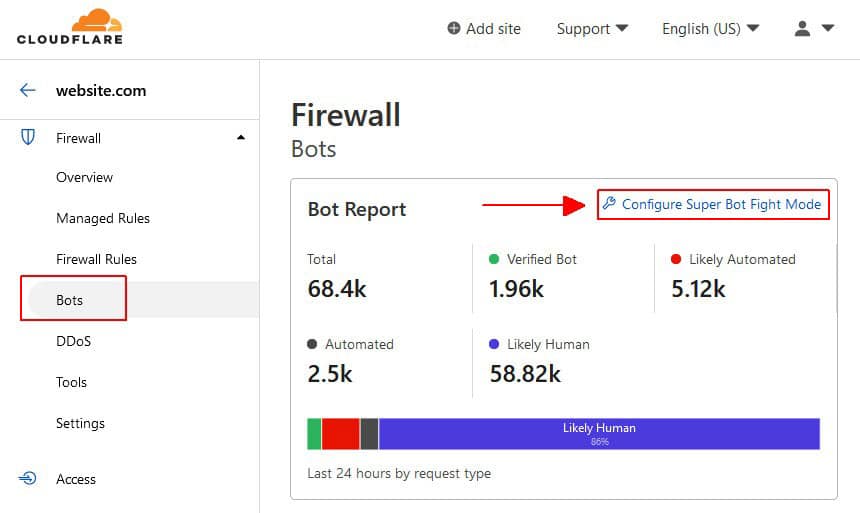

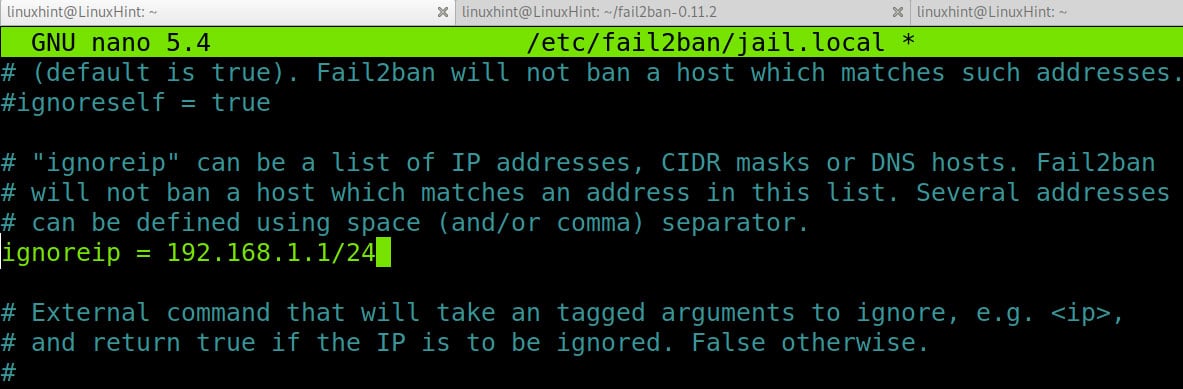

Solution 4: Whitelist your IP address on the Server or Website

You can whitelist your IP address with the website, server, or DNS server where possible.

The options are endless, but a few security services that could be blocking your crawl are Wordfence, Cloudflare, and Fail2Ban. If you are unaware of any security software running, it’s best to consult your IT department.
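Conceptually, a whitelist is a list of addresses or address ranges that the server lets through before any blocking rules apply. The sketch below illustrates that check with Python’s standard `ipaddress` module. It is a hypothetical illustration only, not how Wordfence, Cloudflare, or Fail2Ban implement it, and the addresses are reserved documentation ranges rather than real ones.

```python
import ipaddress
from typing import Iterable


def is_whitelisted(client_ip: str, allowed_networks: Iterable[str]) -> bool:
    """Return True if client_ip falls inside any allowed address or range."""
    address = ipaddress.ip_address(client_ip)
    return any(
        # strict=False accepts ranges written with host bits set, e.g. 203.0.113.7/24
        address in ipaddress.ip_network(network, strict=False)
        for network in allowed_networks
    )


# Hypothetical whitelist: one whole range plus one single address.
allowed = ["203.0.113.0/24", "198.51.100.25"]
```

When you ask an administrator to whitelist you, it helps to say whether you need a single address or a whole range, since office and mobile connections often rotate through several addresses.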

Conclusion

Encountering a 403 Forbidden error while using Screaming Frog SEO Spider can be a frustrating hurdle in the pursuit of optimizing a website’s performance.

However, armed with an understanding of its causes and solutions, one can effectively navigate through this issue. By addressing potential factors such as non-existent pages, authentication requirements, server restrictions, and crawl speed, users can troubleshoot and resolve this error efficiently.

Additionally, implementing strategies like changing User-Agent, IP address, or whitelisting can further mitigate such obstacles. By leveraging the functionalities within Screaming Frog, users can enhance their technical SEO endeavors, ensuring smoother crawling experiences and facilitating the optimization process.

Remember to verify your crawl configuration, check robots.txt files, address broken links, and use canonical tags and nofollow directives correctly to support search engine visibility. By staying informed and proactive about client errors like the 403 Forbidden status code, webmasters can work through technical challenges and keep their sites accessible to crawlers and users.

For more detailed guidance on addressing technical SEO issues, explore the resources available on screamingfrog.co.uk and utilize tools such as the Response Codes tab within the Screaming Frog interface. With diligence and strategic implementation of solutions, users can overcome hurdles and propel their websites toward greater visibility and success in the digital landscape.

Don’t hesitate to contact us if you still have trouble fixing the 403 Forbidden error. We’ll be more than happy to help out!

FAQ

How many people can use a Screaming Frog license?

Published on: 2022-09-01

Updated on: 2024-04-10