TL;DR – Semantic Role Labeling (SRL) in Natural Language Processing is a technique that identifies and assigns roles to words or phrases in a sentence based on their relationship with the main verb or predicate.

In Natural Language Processing (NLP), understanding how words relate to one another, and what functional role each one plays, is paramount. The task of decoding these relationships is known as Semantic Role Labeling (SRL).

What is Semantic Role Labeling?

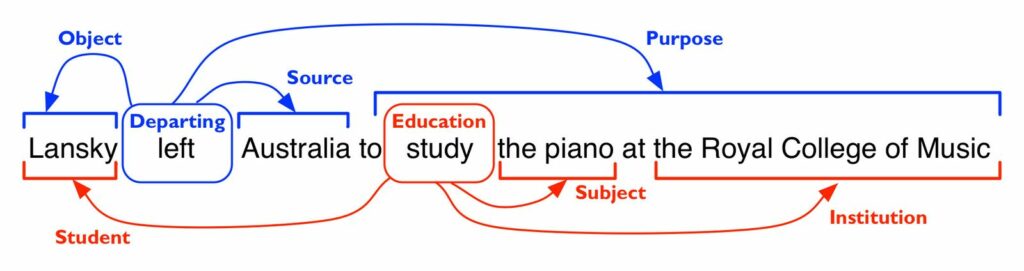

Semantic Role Labeling (SRL) is a technique in NLP that analyzes a sentence to identify its predicate-argument structure. It goes beyond syntactic parsing: for each predicate in a sentence, SRL extracts the semantic roles (or arguments) associated with it. The result is a shallow semantic representation that is richer than syntax alone, capturing who did what to whom.
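To make the idea of a predicate-argument structure concrete, here is a minimal sketch of how one labeled sentence could be represented. The `Argument` and `Frame` classes, the example sentence, and its PropBank-style labels are all hand-written assumptions for illustration; they are not the output of any particular SRL system.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Argument:
    role: str   # PropBank-style label, e.g. "ARG0" (illustrative)
    start: int  # index of the first token in the span (inclusive)
    end: int    # index one past the last token in the span (exclusive)


@dataclass
class Frame:
    tokens: List[str]
    predicate_index: int       # position of the predicate in `tokens`
    arguments: List[Argument]

    def text_of(self, role: str) -> Optional[str]:
        """Return the text of the first argument with this role, or None."""
        for arg in self.arguments:
            if arg.role == role:
                return " ".join(self.tokens[arg.start:arg.end])
        return None


# Hand-labeled example: "Mary sold the book to John"
tokens = ["Mary", "sold", "the", "book", "to", "John"]
frame = Frame(
    tokens=tokens,
    predicate_index=1,  # "sold"
    arguments=[
        Argument("ARG0", 0, 1),  # the seller
        Argument("ARG1", 2, 4),  # the thing sold
        Argument("ARG2", 4, 6),  # the buyer
    ],
)

print(frame.tokens[frame.predicate_index])  # the predicate
print(frame.text_of("ARG0"))
print(frame.text_of("ARG1"))
```

Storing arguments as token spans rather than raw strings keeps each role tied to its position in the sentence, which is convenient when a sentence has several predicates.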

Historical Development and Importance:

The foundations of SRL trace back to the work of researchers like Fillmore, who introduced the idea of frame semantics, and Palmer, who played a significant role in developing the Proposition Bank (PropBank). These early efforts set the trajectory for the evolution of SRL systems and algorithms.

Annotated datasets have been pivotal: PropBank labels the arguments of predicates with numbered roles such as Arg0 and Arg1, while FrameNet supplies frame-semantic annotations. The Penn Treebank also contributed by encoding syntactic structures, providing a solid base on which PropBank's semantic annotations were layered.

Algorithms and Techniques:

SRL has been tackled with everything from traditional machine learning methods to state-of-the-art neural networks. Jurafsky, Manning, and Gildea were instrumental in pioneering some of the early algorithms. The application of neural networks, particularly pretrained models like BERT, has since transformed the SRL landscape.

On the syntactic side, dependency parsing plays a crucial role: dependency trees expose the structure of a sentence, which helps locate candidate arguments. On the semantic side, parsers recover the predicate-argument structure, aided by techniques like chunking, which groups tokens into the phrases that fill each role.
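One common way to connect chunking to SRL is to tag each token with a B- (begin), I- (inside), or O (outside) label and then decode the tags into labeled spans. The sketch below shows only that decoding step; the function name `bio_to_spans` and the tag sequence are assumptions made up for illustration, and the tags would normally come from a trained tagger rather than being written by hand.

```python
def bio_to_spans(tags):
    """Decode BIO tags into (label, start, end) spans; `end` is exclusive."""
    spans = []
    label, start = None, None
    for i, tag in enumerate(tags):
        # Close the open span if the current tag does not continue it.
        if label is not None and tag != "I-" + label:
            spans.append((label, start, i))
            label, start = None, None
        if tag.startswith("B-"):
            label, start = tag[2:], i
        elif tag.startswith("I-") and label is None:
            # Lenient handling: a stray I- tag opens a new span.
            label, start = tag[2:], i
    # Close a span that runs to the end of the sentence.
    if label is not None:
        spans.append((label, start, len(tags)))
    return spans


# Hand-written tags for "Mary sold the book to John"
tags = ["B-ARG0", "B-V", "B-ARG1", "I-ARG1", "B-ARG2", "I-ARG2"]
spans = bio_to_spans(tags)
print(spans)
# [('ARG0', 0, 1), ('V', 1, 2), ('ARG1', 2, 4), ('ARG2', 4, 6)]
```

Treating a stray I- tag as the start of a span is a design choice of this sketch; a stricter decoder could instead discard such tags.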

Applications and Use Cases:

Semantic Role Labeling is vital for numerous NLP applications:

- Question Answering: To understand queries and fetch relevant answers.

- Machine Translation: Enhancing the quality by grasping semantic dependencies.

- Disambiguation: Decoding the context by understanding the roles of words in a sentence.
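As a toy illustration of the question answering use case, the sketch below matches a question against stored facts by comparing semantic roles. It assumes both the question and the facts have already been labeled by some SRL system; the function `answer_by_role`, the dictionary layout, and the example data are hypothetical and chosen only to show the idea.

```python
def answer_by_role(question, facts):
    """Return the filler of the role the question asks about, or None.

    `question` maps roles to text, with exactly one role set to None
    (the slot being asked about). `facts` is a list of fully filled frames.
    """
    asked = [role for role, text in question.items() if text is None]
    if len(asked) != 1:
        return None
    target = asked[0]
    for fact in facts:
        # A fact matches when every known role in the question agrees with it.
        known = ((r, t) for r, t in question.items() if t is not None)
        if all(fact.get(r) == t for r, t in known):
            return fact.get(target)
    return None


# Hand-labeled fact: "Mary sold the book to John"
facts = [{"V": "sold", "ARG0": "Mary", "ARG1": "the book", "ARG2": "John"}]

# "Who sold the book?" asks for the ARG0 of "sold"
who = {"V": "sold", "ARG0": None, "ARG1": "the book"}
print(answer_by_role(who, facts))

# "What did Mary sell?" asks for the ARG1 of "sold"
what = {"V": "sold", "ARG0": "Mary", "ARG1": None}
print(answer_by_role(what, facts))
```

Exact string matching is a simplification; a real system would need to handle paraphrase, inflection, and multiple matching facts.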

Current State and Advancements:

Events like the CoNLL-2005 shared task on Semantic Role Labeling have fostered advancements in SRL. With artificial intelligence driving NLP research, conferences such as those of the Association for Computational Linguistics (ACL) and Empirical Methods in Natural Language Processing (EMNLP) have showcased novel methodologies that push the envelope for SRL.

In recent years, advancements in deep learning have ushered in neural SRL systems. Models like Zhang’s and Carreras’ showcase how neural architectures have significantly improved SRL’s accuracy.
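Accuracy in SRL is commonly reported as precision, recall, and F1 over labeled argument spans. The sketch below is a simplified version of that idea under the assumption that an argument counts as correct only when both its label and its exact span match the gold annotation; the function name `span_prf` and the example spans are made up for illustration and this is not the official scorer of any shared task.

```python
def span_prf(gold, predicted):
    """Precision, recall, and F1 over exact-match (label, start, end) spans."""
    gold_set, pred_set = set(gold), set(predicted)
    correct = len(gold_set & pred_set)
    precision = correct / len(pred_set) if pred_set else 0.0
    recall = correct / len(gold_set) if gold_set else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hand-written spans for "Mary sold the book to John"
gold = [("ARG0", 0, 1), ("ARG1", 2, 4), ("ARG2", 4, 6)]
# The prediction finds every span but mislabels the last one as ARG1.
predicted = [("ARG0", 0, 1), ("ARG1", 2, 4), ("ARG1", 4, 6)]

precision, recall, f1 = span_prf(gold, predicted)
print(f"P={precision:.2f} R={recall:.2f} F1={f1:.2f}")
```

Because the score requires the label to match as well as the span, a system that finds the right phrase but assigns it the wrong role is penalized in both precision and recall.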

Challenges:

Despite these advancements, SRL still faces challenges: the nuances of the English language, the difficulty of distinguishing between specific roles like Arg1 and Arg2, and the limited availability of training data for less-resourced languages.

Conclusion:

Semantic Role Labeling stands as a testament to the intricacies of human language. As we progress, the confluence of machine learning, artificial intelligence, and linguistic theories will drive SRL to new heights, enhancing our machines’ comprehension of language semantics.

Dive deep into the world of NLP with datasets, annotations, and algorithms, and understand the fabric of language that binds our world. The future of SRL looks promising, with continuous research and advancements shaping the narrative.

Published on: 2022-03-28

Updated on: 2024-02-29